The Rise (and Fall?) of Artificial General Intelligence: 2022 and Beyond

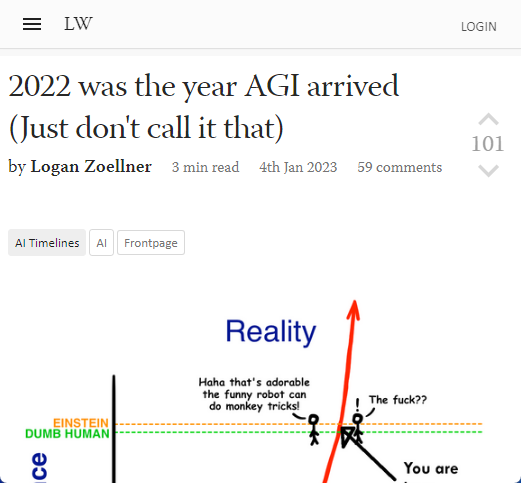

AGI arrived in 2022 (just don’t call it that).

I don’t really care about IQ test results; ChatGPT doesn’t perform at human level. I’ve spent a lot of time with it. Sometimes it sounds like a person with an IQ around 83. At other times it sounds like a person with a much higher IQ and a lot of naive preconceptions. If you force it to think and take it outside its comfort zone, it sounds more like a person with severe brain damage. You can walk it through a series of simple inferences and still get a pattern-matched, obviously incorrect answer. I wish I had saved what it said about cooking and neutrons. It became obvious that the model is not grounded in a model of the physical world.

Other examples have been cherry-picked. After prompting DALL-E and Stable Diffusion a lot, I am pretty sure those drawings were heavily cherry-picked. Normally, you get a couple of images that match the prompt, a bunch that don’t really fit, and a little eldritch terror. This doesn’t happen when you ask a person to draw something, not even a child, and you don’t need to repeat the request nearly as often.

Competitive coding involves carefully selected problems that are as simple as it gets: the tasks are well-defined, written in a way that already sounds like code, and come with comprehensive test cases. The “coding assistants”, on the other hand, are annoying their users with really stupid bugs in their output.

Source:

https://www.lesswrong.com/posts/HguqQSY8mR7NxGopc/2022-was-the-year-agi-arrived-just-don-t-call-it-that